Shoe Detection, Segmentation, and Stitching

Shoe Detection, Segmentation, and Stitching

A pipeline to automatically overlay retailer shoes onto user photos, helps users visualize how they would look in different retailer shoes. View on GitHub

Key Highlights

- Used YOLOv3 for shoe detection.

- Combined GrabCut with SIFT feature convex hull for segmentation.

- Stitched retailer shoes onto user images by applying similarity transformations based on shoe contours.

Objective

The goal of the pipeline is to automatically detect and segment shoes from user photos and then to overlay/stitch them with retailer shoes.

Methodology

The pipeline consists of three steps.

- Detection

- Segmentation

- Stitching

Detection

In the detection step, the bounding boxes of shoes in the user-provided photo are detected using YOLOv3 ( and ).

Segmentation

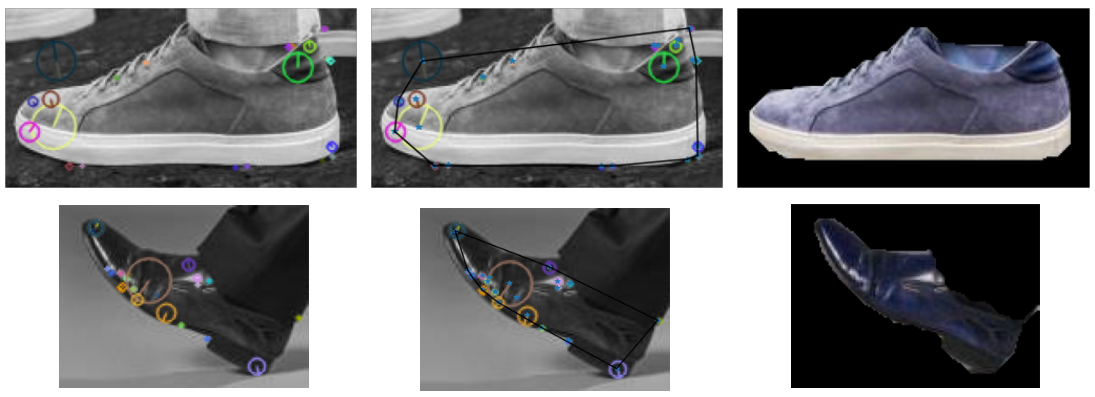

In the segmentation step, the GrabCut algorithm segments the shoe from the background. A convex hull of the SIFT features is provided to GrabCut as the probable foreground region ().

Stitching

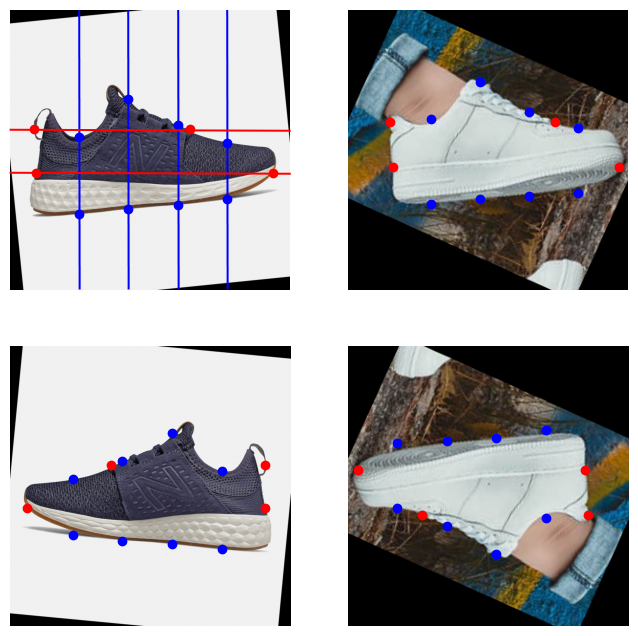

First, align the retailer shoes and the user’s shoes by using the segmentation masks’ principal eigenvector ().

After aligning the shoes by rotating, points from the shoes’ contour are sampled by slicing the shoes vertically and horizontally ().

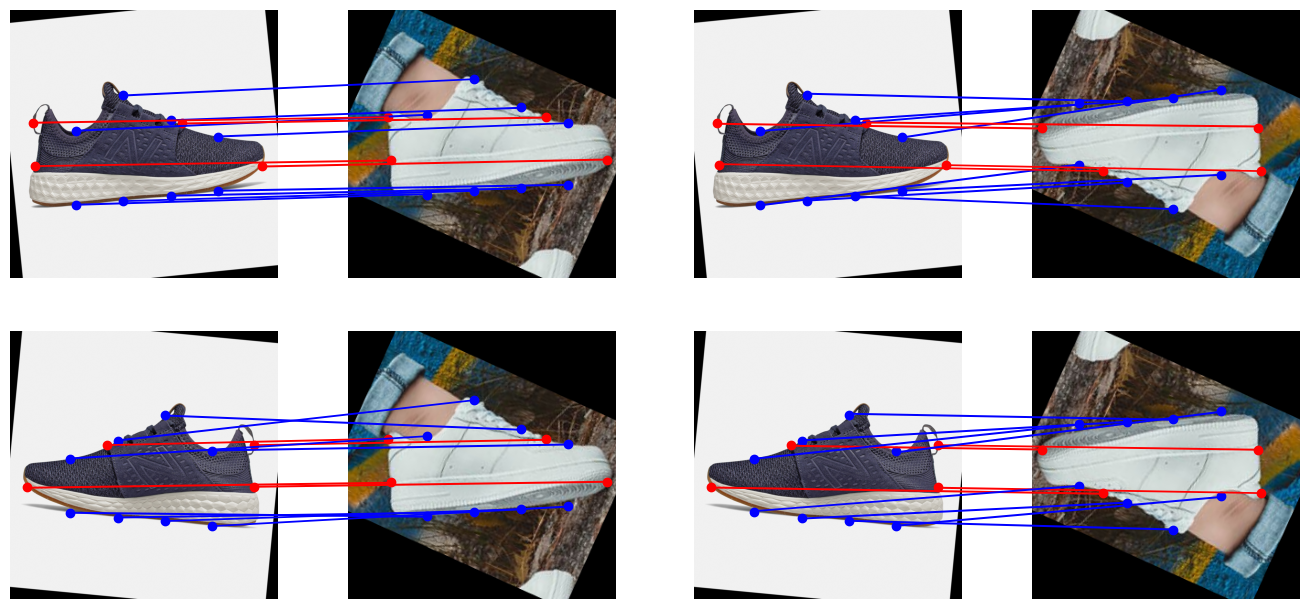

Using the sampled points from the shoes’ contour, a similarity transformation matrix is computed.

However, aligning the shoes using the principal eigenvector still doesn’t tell the direction in which the shoes are facing. Hence, the pipeline tries all possible combinations using left/right-facing shoes, and then it automatically chooses the best matching transformation ().

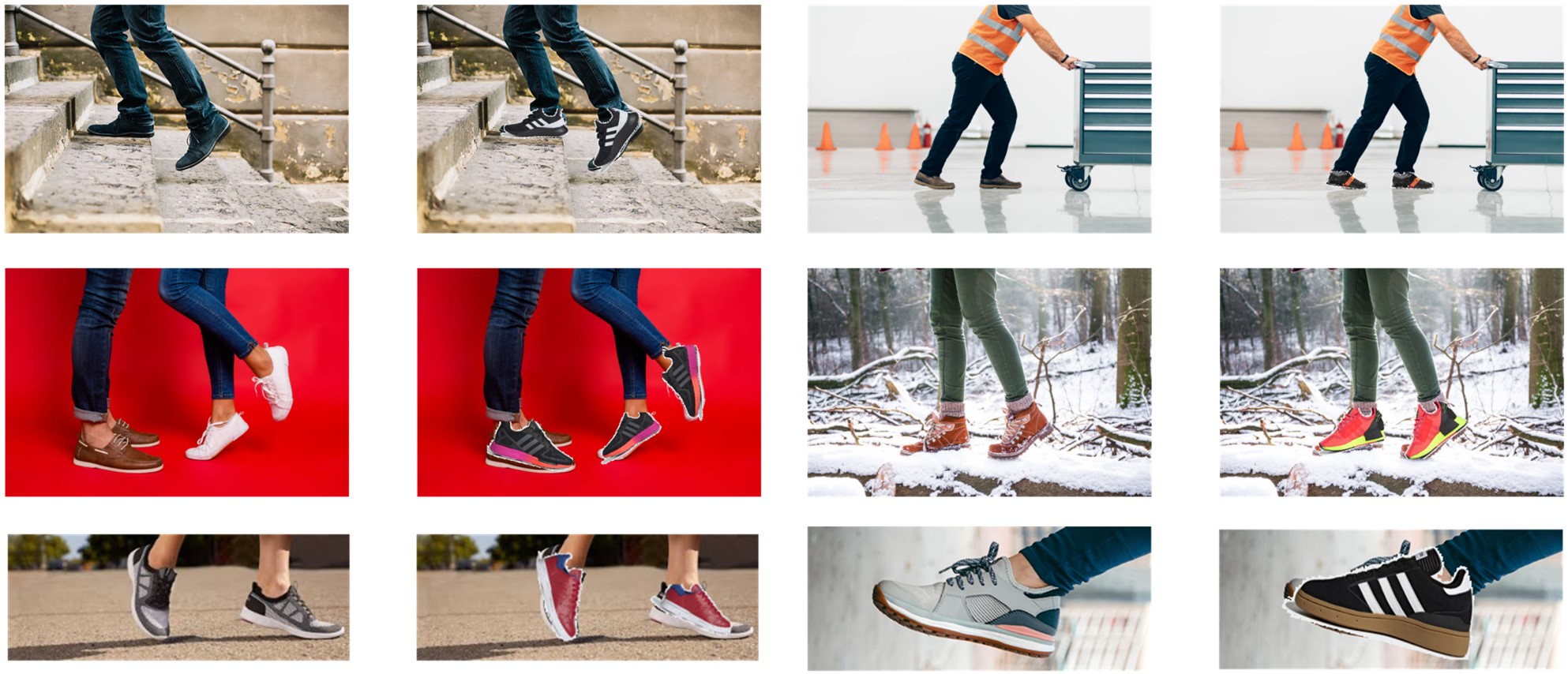

Finally, the best matching transformation is applied to the retailer shoe, which is then overlayed on the user’s shoe ().

Results

Limitations and Future Work

-

Combining the detection and segmentation stages into one, using a neural network to directly perform segmentation would improve performance and simplify the pipeline. Also, while YOLO is fast, it is not the best model to use when aiming for detection/segmentation accuracy.

-

The pipeline is limited to side-views of shoes, and the pipeline cannot handle soft-body objects that are more deformable, such as clothes. To handle novel-view synthesis for deformable objects, conditional diffusion models will need to be used (similar to OutfitAnyone).